AI UI

Thought Experiments on the Future

AI is changing everything. From smarter search to AI tutors to robot call centers to chatbots everywhere… everyone in tech has to be thinking:

Awesome! But… what’s next?

Robot servants? Cortana girlfriends? President Siri??

But I digress. Elon Musk recently appeared on the Joe Rogan podcast and proclaimed that there will be no apps in five years.

I’m not quite so futuristic. Just because we can do something doesn’t mean that we will.

That is, some people may go all in on AI, but I suspect that the majority of people will not.

Take, for example, the popularity (or rather, the lack thereof) of “Hey Google.” This feature has been available for over nine and a half years. Google proudly reports 91.9 million active users at the time of writing. That may seem like a lot (and in some ways, it is), but there are between 3.5 and 4.5 billion Android users right now. That means only about 2.3% of Android users use it (roughly 2.0% to 2.6%, depending on which end of that range you take).
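To make that percentage explicit, here is a quick Python check using only the figures quoted above (91.9 million active users of the feature, 3.5 to 4.5 billion Android users). The ~2.3% figure corresponds to the 4-billion midpoint of that range; the two ends of the range give the low and high estimates.

```python
# Share of Android users who use the voice-assistant wake-word feature,
# using the figures quoted in the text (not independently verified here).
HEY_GOOGLE_USERS = 91.9e6
ANDROID_USERS_LOW = 3.5e9
ANDROID_USERS_HIGH = 4.5e9


def share(part: float, whole: float) -> float:
    """Return part / whole as a percentage."""
    return 100 * part / whole


midpoint = (ANDROID_USERS_LOW + ANDROID_USERS_HIGH) / 2  # 4.0 billion

# More Android users means a smaller share, so the HIGH user count gives the LOW share.
print(f"low estimate:  {share(HEY_GOOGLE_USERS, ANDROID_USERS_HIGH):.2f}%")  # 2.04%
print(f"midpoint:      {share(HEY_GOOGLE_USERS, midpoint):.2f}%")  # 2.30%
print(f"high estimate: {share(HEY_GOOGLE_USERS, ANDROID_USERS_LOW):.2f}%")  # 2.63%
```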

Underwhelming, to say the least.

But why so few?

No doubt, it’s a great and powerful feature. But (generalizing from my own perspective to the common case) I suspect that many users are not comfortable having their microphone listening 24/7 for the sake of a few seconds of convenience, even if they use the feature several times a day.

Sometimes what is ‘best’ or ‘most convenient’ on paper isn’t so in practice; privacy, security, and the comfort and familiarity of legacy usage often take precedence.

That being said - what will applications be like in this new Intelligence Age?

I think the answer is somewhat nuanced, in that it depends on the specific use case.

The obvious answer is that apps will be smarter, more convenient, and more customized to the end user.

We’re already seeing this play out with chatbot assistants on most major websites, and in how much more useful and efficient AI-enabled Google Search has become.

But what about the other use cases?

Consider the top eleven websites in the world, ranked by web traffic:

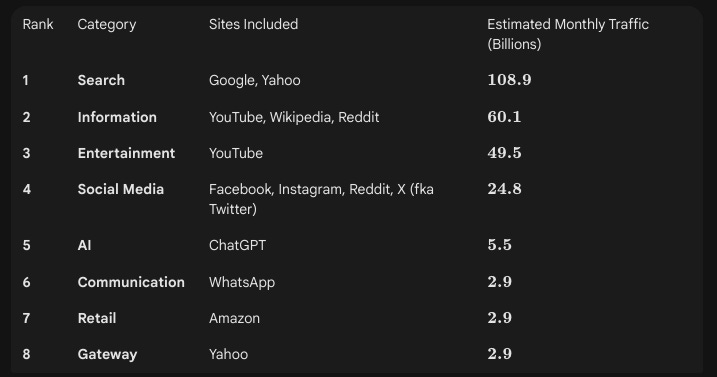

Now, if we rank the categories alone by estimated web traffic, we get a clearer picture of the most popular use cases on the World Wide Web.

Let’s consider how AI is currently being used in each of them…

Search, Gateway, Information & AI

Google has a very effective AI-integrated search. The AI can search hundreds of sites in parallel, in seconds, then collate and summarize the results about as quickly as it serves up the search results we have already come to know and love. You can then dig deeper: follow any of its referenced links yourself, or just have a conversation with the AI about the topic.
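The "search hundreds of sites in parallel, then summarize" pattern is essentially a fan-out/fan-in. Below is a minimal Python sketch of that shape using `asyncio.gather`. It is not Google's pipeline: `fetch_page` and `summarize` are hypothetical stand-ins (the fetch just sleeps to simulate network latency, and the "summary" is a placeholder string), and the URLs are made up.

```python
import asyncio


async def fetch_page(url: str) -> str:
    """Hypothetical stand-in for a real HTTP fetch; simulates network latency."""
    await asyncio.sleep(0.1)
    return f"contents of {url}"


def summarize(pages: list[str]) -> str:
    """Hypothetical stand-in for the model's collate-and-summarize step."""
    return f"summary of {len(pages)} pages"


async def ai_search(urls: list[str]) -> str:
    # Fan out: start every fetch at once, then wait for all of them to finish.
    pages = await asyncio.gather(*(fetch_page(u) for u in urls))
    # Fan in: hand the collected pages to the summarizer in one batch.
    return summarize(list(pages))


urls = [f"https://example.com/result/{i}" for i in range(200)]
print(asyncio.run(ai_search(urls)))  # summary of 200 pages
```

Because the fetches run concurrently, all 200 simulated requests finish in roughly the time of one, which is why the summary can arrive about as fast as the ordinary results list.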

Entertainment, Social Media & Retail

Recommendation engines! YouTube does this seamlessly, always seeming to know which video we might want to watch next. Amazon does it more explicitly, with its “Customers who bought this also bought” and “Customers who viewed this also viewed” product carousels. Amazon’s carousels are the simpler, single-product case: recommendations keyed to the one item you are looking at. YouTube must weigh far more. It looks at our entire watch history (heavily weighted toward what we have watched most recently) as well as many other signals, including our Google search history, the time of day, our geographic location, the videos we’ve liked, disliked, and commented on, and engagement metrics such as click-through rate, watch time, and average view duration (i.e. how much of a video you and other users have watched, as a proxy for user satisfaction). It also (obviously) considers video metadata such as the title, description, tags, and content type (i.e. whether it is a long-form video, a Short, or a live stream).
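The single-product "Customers who bought this also bought" case can be illustrated with simple item-to-item co-occurrence counting: for each pair of items, count how many orders contain both, then recommend the most frequent partners. This is a minimal Python sketch of that general technique, not Amazon's actual system (which is far more sophisticated), and the order data is hypothetical.

```python
from collections import Counter, defaultdict
from itertools import combinations


def build_co_purchase_counts(orders):
    """Count how often each pair of items appears together in the same order."""
    co_counts = defaultdict(Counter)
    for order in orders:
        items = set(order)  # ignore duplicate items within a single order
        for a, b in combinations(sorted(items), 2):
            co_counts[a][b] += 1
            co_counts[b][a] += 1
    return co_counts


def also_bought(co_counts, item, k=3):
    """Return up to k items most often bought together with `item`."""
    return [other for other, _ in co_counts[item].most_common(k)]


# Hypothetical order history, for illustration only.
orders = [
    ["tent", "sleeping bag", "lantern"],
    ["tent", "sleeping bag"],
    ["tent", "camp stove"],
    ["sleeping bag", "pillow"],
]
counts = build_co_purchase_counts(orders)
print(also_bought(counts, "tent"))  # 'sleeping bag' comes first: it appears with 'tent' in two orders
```

Note how little context this needs: only the current item. YouTube-style ranking, by contrast, blends many signals (recency-weighted history, engagement, metadata), which is what makes it the harder, multi-item case.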

Communication

Here, again, Google is leading the way; note that Gmail is consistently a top-40 site by worldwide traffic. It currently offers compelling autocomplete and suggested replies (Smart Compose and Smart Reply), smart search, some light Google Workspace integration (Docs, Sheets, Slides, etc.), and some third-party hookups, including Grammarly and Superhuman Mail (the virtual email assistant you probably never asked for: it can automate inbox management, draft emails, summarize threads, and execute workflows).

WhatsApp integrated Meta AI in April 2024. You can chat with it directly like any other AI, and also use it in other chats (including group chats) to generate text, generate and edit images, summarize, and translate in real time. In the future, it will be voice-enabled and able to provide context for images, e.g. “What plant is this?” or “Where was this photo taken?”

Great. Awesome. Wonderful.

But what about the future?

Consider how the crew of the Starship Enterprise talks to the ship’s computer. Or, similarly, how you would give commands to a super smart, super fast personal assistant who was an expert in almost everything. This is where AI is taking us. This is the future of UI.

That is, for many users, traditional UI will become an antipattern. The interface will be either a command line (typed, for privacy) or simply voice input, with traditional UI appearing only as the verification or confirmation step, e.g. “This is what you’re about to pay for. Click here to confirm.”
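Here is one way to picture "AI does the work, traditional UI only confirms" as code. This is a minimal Python sketch under stated assumptions: `interpret` is a hypothetical stand-in for the AI step that turns a spoken or typed command into a structured action (it ignores its input and returns a hard-coded order), and the user's yes/no answer is simulated with a callback. Nothing is executed until the confirmation step returns `True`.

```python
from collections.abc import Callable
from dataclasses import dataclass


@dataclass
class PendingOrder:
    item: str
    quantity: int
    unit_price: float

    @property
    def total(self) -> float:
        return self.quantity * self.unit_price


def interpret(command: str) -> PendingOrder:
    """Hypothetical stand-in for the AI step that turns a command into an action.
    A real system would call a model here; this one ignores `command`."""
    return PendingOrder(item="coffee beans", quantity=2, unit_price=14.50)


def render_confirmation(order: PendingOrder) -> str:
    """The small piece of traditional UI: show exactly what will be charged."""
    return f"{order.quantity} x {order.item} = ${order.total:.2f}. Confirm? [y/N]"


def place_order(command: str, confirm: Callable[[str], bool]) -> str:
    order = interpret(command)
    # Nothing happens until the user explicitly approves the rendered summary.
    if confirm(render_confirmation(order)):
        return f"Ordered {order.quantity} x {order.item}"
    return "Cancelled"


# The user's answer is simulated; in a real UI it would be a button press or a spoken "yes".
print(place_order("reorder my usual coffee, two bags", confirm=lambda prompt: True))
```

The design choice worth noting is that the confirmation screen defaults to "no": the AI can propose, but only the human can commit.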

However, traditionalists (some may call them Luddites) will cling to the existing WIMP interface.

Consider how many people are using AI right now. Forbes cites a study estimating that 66% of people use AI regularly, but this statistic seems significantly overstated. The study, conducted by the University of Melbourne, surveyed 48,384 people across 47 countries. However, the countries themselves were selected based on their “leadership in AI activity and readiness.” In other words, the sample itself was skewed toward an AI-friendly population!

For a perhaps more accurate AI usage statistic: the world population currently sits at roughly 8.3 billion people, but 2.2 to 3 billion of them lack the means (the hardware or connectivity) to use AI at all. That leaves between 5.3 and 6.1 billion potential AI users. Resourcera reports that 900 million people are using AI. That is ~10.8% of the world’s total population, or ~14.75% to 17% of all AI-capable people.
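The same arithmetic in Python, using only the figures quoted above (8.3 billion people, 2.2 to 3 billion without the means to use AI, 900 million AI users). Subtracting the larger "unconnected" estimate gives the smaller pool of capable users, and therefore the higher percentage.

```python
# Figures as quoted in the text; they are estimates, not verified here.
WORLD_POPULATION = 8.3e9
UNCONNECTED_LOW, UNCONNECTED_HIGH = 2.2e9, 3.0e9
AI_USERS = 900e6

# The capable population is everyone minus those lacking hardware or connectivity.
capable_low = WORLD_POPULATION - UNCONNECTED_HIGH  # 5.3 billion
capable_high = WORLD_POPULATION - UNCONNECTED_LOW  # 6.1 billion


def pct(part: float, whole: float) -> float:
    """Return part / whole as a percentage."""
    return 100 * part / whole


print(f"{pct(AI_USERS, WORLD_POPULATION):.1f}% of the world")  # 10.8% of the world
print(f"{pct(AI_USERS, capable_high):.2f}% to {pct(AI_USERS, capable_low):.1f}% of AI-capable people")  # 14.75% to 17.0%
```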

Fewer than one in five.

Just because someone can use AI doesn’t mean that they will.

That same University of Melbourne study reported that (even among the AI-friendly countries in its sample) “only 46% of people globally are willing to trust AI systems.”

Legacy computer UI, the WIMP (windows, icons, menus, pointer) interface, is alive and, IMHO, always will be.

For clarity, consider the 4 major epochs of computer UI:

1. The command line
2. WIMP (aka Legacy UI)
3. Bridge UI: AI integrated into (and often merely tacked onto) the existing WIMP interface; this is where we are today
4. Future UI: AI all the things!

Within a few years, I believe that companies will need to provide two user interfaces: the legacy WIMP interface (perhaps with optional integrated AI features, essentially making it Bridge UI), and an AI-only command line / voice UI, in which the rendered WIMP interface is merely a verification or confirmation step in the checkout or information retrieval process. The latter for power users, the former for traditionalists.

While I do expect the number of AI users to grow, not everyone will fully embrace AI. Not everyone is efficiency-mad or results-driven all the time. Some people like scrolling. Some people enjoy taking their time to shop for this or that, to browse through the possibilities, or to just follow their nose and see where their web journey takes them.

But what do you think? Where is AI headed? What will UI look like in the future? Where do your preferences lie?

Now is our chance to shape the future.

Inspired by The CTO Substack post "What if we're building AI for the wrong decade?" (https://ctosub.com/p/what-if-were-building-ai-for-the), in which Etienne de Bruin, founder of 7 CTOs, poses a fundamental, critical question: essentially, why are we shoehorning AI, an infinitely capable technology, into a computer interface that is over 50 years old?

(Etienne was the first CTO I ever had, back at Monk Development in Old Town San Diego. I had no idea how lucky I was!)